
bert's blog v1.21 Powered by glolg Programmed with Perl 5.6.1 on Apache/1.3.27 (Red Hat Linux) best viewed at 1024 x 768 resolution on Internet Explorer 6.0+ or Mozilla Firefox 1.5+ entry views: 1530 today's page views: 2243 (70 mobile) all-time page views: 3797124 most viewed entry: 18739 views most commented entry: 14 comments number of entries: 1271 page created Fri Jun 5, 2026 21:06:52 |


CNY again, and the annual influx of questionable picture greetings on WhatsApp, with this year's special activity being potted plant selection, and one of my worst-ever Dai Dee streaks (which eventually ended).

Oh, and the new Gigabyte GTX 960 (Mini ITX version) arrived in the mail (and, at the recommended S$272 plus free shipping, over fifty bucks cheaper than from Sim Lim! How to support them liddat?), replacing the old GTX 460 (which I'm suspecting was contributing to heat buildup in the case). Frankly, for all the improved specs, I'm not experiencing too much of a difference, but it does seem to boost compute ability some.

Ah, and as of Thursday, cholesterol's no longer a problem, according to American health experts, right on time to wolf down bak kwa completely guilt-free. See, things always tend to work out in the end.

Not Merely Examined

"They have multiplied." said the biologist.
"Oh no, an error in measurement." the physicist sighed.
"If exactly one person enters the building now, it will be empty again!" the mathematician concluded.

Recently got wind that the university will be starting a new module on Quantitative Reasoning (which Yale-NUS appears to have gotten a headstart on). On one hand, I suppose it could sound rather superfluous - you can deduce... with numbers! And past evidence! What a revelation! - but then, I gather that one might be quite surprised at how novel the concept might be, even to college students.

Not that multiplication alone is all that trivial, to what I'd suppose is a not-insignificant subset of that population, with the mainstream media going wild over a boom in fertility rates... to 1.25. Well, baby steps. And let's be honest, the local environment was never all that conducive to the in-house production of the next generation of worker drones.

Let's face it, it's gunna come down to this.
(Source: weknowmemes.com)

Updating last month's critique on local labour woes, The State's Times has continued their drilling in of the "manpower is the issue" mantra (with food and rental costs secondary), but for once, their carefully-selected published stats have already cast doubt on the party line to begin with:

Now, even under the generous assumption that our cost of living is about comparable to all the other countries/regions above, when it would probably be more realistic that plenty of smaller cities/towns in the US/UK/AUS would be rather cheaper to live in, one can clearly observe that waiters (and other manual trades) are already being paid horrifically little, with savings on supposedly low taxes unlikely to make up for even a 36% deficit, much less 70-over percent ones! Frankly, if restaurants are still struggling to survive despite paying so significantly under par for staff, the most obvious reasons would be:

Guess which of these I'm betting on?

So, on to a review of one of my more recent reads, seasoned with all the sidetracking you've come to expect from here; have a cuppa (it's good for you, for now), and we're off:

(Source: Amazon)

Making The Rounds

We begin off-tangent, with an update on Deflategate. It's died down somewhat after the Patriots won the Super Bowl on the back of a scarcely less hotly-debated call, which in Singlish might be best described as "kiang jiu ho, mai gei kiang" (being smart is enough, don't act smart). With time running out, one yard to securing a practically-unassailable lead, and with one of the best guys in the game at securing that single yard with near-zero risk of losing possession in their service, the opposition Seahawks elected to... fling the ball into a crowd. Sure, it could have worked... but it didn't, and so the head coach who made the decision (rightly) took the stick.

Given that it seems unlikely that the championship can be withdrawn even if it were proven that the balls were underinflated, the near-impossibility of proving beyond reasonable doubt that any underinflation was systematic (sans whistleblower), and not-uncommon suspicions that most teams have their own small "special treatments" going on in the background, Deflategate seems destined to be an academic curiosity.

Which is not to belittle (semi-)academic curiosity; early statistical treatments of the Patriots' fumble rates have come under unrelenting attack by competing analysts (many of whom, for some reason, felt it necessary to clarify whether they were Patriot fans, either way). Among these, one of the most popular rebuttals has come from a U Chicago PhD data scientist (disclaimer: lifelong Patriot fan), who claims that Sharp's original take has cherry-picked data and exaggerated estimates, while linking to supporting sources.
Sharp's response has been to stick to his guns about the Patriots hugely improving after teams began supplying their own balls in 2007, while defending his choice of multi-year aggregate stats as essential due to the very low number of games (16, in the regular season) per year.

The controversy raged from more direct angles too. On the question of whether a couple fewer pounds per square inch of air pressure would actually materially help receivers catch the ball, some (including a former quarterback) answered in the affirmative, particularly in colder, wetter conditions. Popular Science, however, commissioned a computer physics simulation, which demonstrated that the additional give would be barely a millimetre, surely not enough to matter.

And as to whether natural conditions could have produced the drop in pressure in the first place, after early (some bungled) analysis by famous science popularizers, a graduate student from CMU (disclaimer: Patriot fan) has done the grunt work with actual footballs, and reported that the loss of pressure could have occurred without external influence - not that this has made much of an impression on the rest of America.

[N.B. And, lest one gets the impression that only the NFL is so ballsy, it turns out that basketball had the jump on them, from the Seventies; they're also putting part of Tiger Woods' past dominance down to his solid balls, though in this case it was not a question of how big it was, but how well he used it.]

Still not a whisper from Columbia University, however, which is where we return to My Life As A Quant (wait, are we still doing the book review?!)

Innocent Beginnings

The book is Derman's autobiography, in which he recounts his career, split almost exactly evenly in halves. Chapters one through eight describe the first twenty years of his professional life as a physicist, spanning Columbia, UPenn, Oxford, Rockefeller, Colorado and then Bell Labs.
Chapters nine through sixteen move on to his next twenty years, spent in finance, where he made the rounds of Goldman Sachs and Salomon Brothers, before retiring (as of 2004, the year of publication) back to Columbia to teach financial engineering.

Derman was almost the archetype of a starry-eyed graduate student - after excelling in applied mathematics at his native (and admittedly not-super-up-to-date) University of Cape Town in South Africa and graduating at twenty, he was drawn by the desire for scientific immortality to the legendary physics department of Columbia, chock-full of past and future Nobel prizewinners in what was then indisputably the premier field for aspiring academic stars to be.

Despite being extremely clever, as his future accomplishments would bear out, Derman was not immune to expectation decay - as he said himself: "at age 16 or 17, I had wanted to be another Einstein; at 21, I would have been happy to be another Feynman; at 24, a future T.D. Lee would have sufficed. By 1976, sharing an office with other postdoctoral researchers at Oxford, I realized that I had reached the point where I merely envied the postdoc in the office next door because he had been invited to give a seminar in France..."

It happens.
(Source: phdcomics.com)

He also quickly learnt that graduate school was a paradise of sorts... if you didn't mind staying indefinitely. He got out in seven years, one of his friends in ten, and many others (historically, almost half) never made it in the end [N.B. If I had to (presumptuously) give just one piece of advice to a new doctoral student, it would be: get (the right) advisor and some project to work on, as soon as possible]; as a theorist, he was further disadvantaged, as there was relatively little lower-level "busywork" he could assist in.
Having accepted that he would not be one of the fast-track wunderkind, Derman set about snagging himself an advisor, and after a failed courtship (academic relationships can span all the way from an intimate melding of minds - Derman covers T.D. Lee's acrimonious breakup with C.N. Yang, and the insularity among Lee's returning disciples - to the breathtakingly distant ["Oh, you're my student? What's your name again?"]; one of his friends spent half a year toiling away on a thesis problem by himself, before discovering that his advisor had already solved it, when they next met), he became the first PhD student of one of Lee's proteges.

Show Me The Proof!

And a timeout, for a diversion. We consider the question: theory or data? Further expressed with related terms:

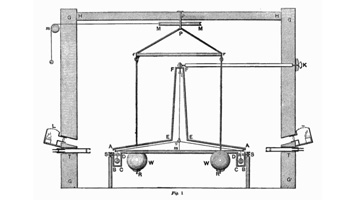

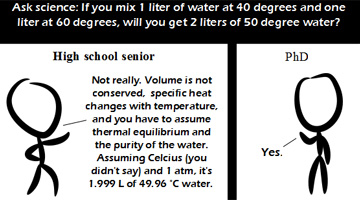

Well, the obvious answer is that we need both, for "practice without theory is blind, but theory without practice is sterile." (and guess who said that?). Definitely, plenty of the terms in the above table can plausibly belong in the other cell (and frankly, almost everyone uses at least a bit of both), but you get the general drift.

That said, the rift is real - being an expert in both theory and experiment at the top levels is so rare, that Derman states that Fermi, whom he holds to be the spiritual father of the Columbia physics department, was one of the last to truly excel at both. For Derman himself, it wasn't much of a choice: "...The essence of theoretical physics is the attempt to look at the universe, and then mentally apprehend its structure. If you are right, you emulate Newton and Einstein: You find one of the Ten Commandments... This was the struggle to which I aspired. Anything else would have been a compromise that I was not prepared to make..."

Of course, one can imagine that the ongoing "Big Data" hype is a form of pushback to this worldview. Collect enough information, so the narrative goes, and you can discover truths heretofore thought impossible... or even imagined!

Some fortunate ones were born with the raw tools; Others, they have just got to make do with "optional extras"
(Source: motifake.com)

Cribbing from Brock's American Gridlock for a spiel against "data crunching", and for good old-fashioned logic: "Most people... incorrectly assume that, with enough data, scientists can 'crunch' their way to the truth. In reality, data almost always underdetermine the truth, and that partly because of this reason, inductive logic alone has led to the discovery of very few important scientific truths..." Brock cites Euclid's geometric axioms, Nash's equilibria, Einstein's general relativity and Shannon's information theory, among others, as the fruit of deduction from first principles.
Induction - data - is in Brock's opinion almost an afterthought, to be used for testing and falsification after the theory has been described, and he bemoans the inversion of emphasis - data first, theory second - becoming common in many disciplines. The remainder of his book is an attempt to resolve five largely economic/political "big challenges" through pristine deductive logic, instead of partisan statistic-twisting (note: China's currency manipulation remains an axiom).

A Very Simple Opinion

On theory versus data, my current take remains that the divide should come down to a single key consideration: is there actually a "universal truth" in the domain? Hopping back to Derman, this is a major source of tension between his disciplines of physics and finance - in the former, there is The Law, which applies completely impartially to everyone. If you conceptualize gravity right, then whether it is apples or light, it is all the same.

Or balls. Balls *always* work
(Source: wikipedia.org)

Whereas in finance, politics, or even biology, it is an open question as to whether a universal truth even exists. In these cases, it is easy to understand the lure of data-driven analysis - practitioners may despair of ever unraveling all the entwined real-world variables, but they still want a plausible solution to their particular case.

Further, physics has the advantage of more easily being separated from its application. If an artillery unit is wiped out by an enemy, army scientists tend not to be accused of "wrong physics". Lose money on the market, however, and quants can get hammered for "bad models".

In any case, it is certainly true that mountains of data, by themselves, are often not very useful. Recalling the dabbling with Eureqa some years back, one notes that one still has to set the variables to mine relationships between, and that selecting these variables is often the toughest part.
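[N.B. For a rough feel of what a formula-mining tool is up against once it starts combining variables on its own, here is a back-of-envelope sketch in Python. It is emphatically not how Eureqa works internally (no idea, really); it just counts the formulas one could write down as binary expression trees. The function names, and the assumption that every formula is a tree with a variable at each leaf and a two-argument operator at each branch, are mine.]

```python
from math import comb


def catalan(n: int) -> int:
    """n-th Catalan number: how many binary tree shapes have n internal nodes."""
    return comb(2 * n, n) // (n + 1)


def count_expressions(num_vars: int, num_leaves: int, num_ops: int = 2) -> int:
    """Count formulas written as binary trees with exactly num_leaves leaves.

    Assumptions (illustrative only): each leaf is one of num_vars variables,
    each internal node is one of num_ops binary operators (say, + and *).
    Equivalent forms such as a+b and b+a are counted separately, so this is
    an upper bound on genuinely distinct hypotheses.
    """
    internal_nodes = num_leaves - 1
    shapes = catalan(internal_nodes)
    return shapes * num_vars ** num_leaves * num_ops ** internal_nodes


if __name__ == "__main__":
    # Five data columns, addition and multiplication only.
    for leaves in range(1, 9):
        print(leaves, count_expressions(num_vars=5, num_leaves=leaves))
```

By this count, five columns and two operators already allow 21 million six-term formulas. The tally double-counts equivalent forms, so treat it as a ceiling rather than an exact figure.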
While the point of Eureqa and similar software is that it can automatically generate additional hypotheses (e.g. if your data does not have elapsed time E, but does have start and end time columns s and e, it should eventually generate E as e - s), one quickly realises that the set of hypotheses, even under just the elementary relations (say, addition and multiplication), explodes spectacularly. Additionally, some concepts, like primes, would simply not be constructible.

It can be interesting to consider a "pure" data-based approach to physics. Using the example of F = ma: instead of using any two of the variables to produce the third, our pure data-ists would, given say m=3.72 and a=9.81, turn to their ginormous collection of past observations and read F off the closest matches. Of course, there remain many possible approaches at this stage - how to interpolate between conflicting observations, for one - but the point is, it could actually work.

The major limitations are then firstly that there is no actual "understanding" - without extra considerations, cases like throwing a feather cannot be accounted for properly (though this is true for the equation too, the whole process of distillation makes reasoning viable). Secondly, there is the problem of what happens in ranges with no data, or biased data (e.g. all instances with a>1000 have only been collected from space); how can this data be adapted?

Still, the whole point is that so much of classical physics has for some reason been so... elegant. Concise. Perhaps part of this is down to a natural monotonicity - if A and B interact for some effect, and increasing A increases the effect, one expects continuing to increase A to generally continue to increase the effect, possibly subject to diminishing returns. This is often, in practice, not the case once those bothersome humans become involved.

And few more so, than the eternal pedant.
(Source: comicjk.com)

But Back To The Show

Before we get too lost, let's return to Derman.
His doctoral work in particle physics was certainly deductive, described as it was as trying to figure out a whole song, from hearing a few isolated bars. Along the way, he acquired a wife (a fellow physics grad student, who later turned to molecular biology), and the two of them would later find out just how intractable the two-body problem was - Derman would spend years at a stretch away from his young son.

It is worth a note that even in the 1970s, with science funding still powered by the Space Race and the Cold War, many talented PhDs still found themselves in postdoctoral hell, moving from one place to another as an itinerant scholar. Some even became "freebies", who got a desk and maybe access to basic research facilities at some university, but nothing else.

A love for the subject could get one only so far, and two years into a less-than-happy stay at Penn, Derman was seriously considering leaving it all behind. His passion was rekindled by new experimental data from CERN that was closely related to his thesis topic, and resulted in an analysis that snagged him an offer from Oxford.

Now, readers with good memories will recall that he was soon envying the next-door postdoc. Anyhow, he described the venerable British institution as proudly old-fashioned and overwhelmingly courteous, but also stuffily bureaucratic, with the English in general comparatively xenophobic.

With newborn son in tow, the Dermans returned to the States, where he landed his next gig at the research-only Rockefeller University, where he felt "like a philosopher-king". Unfortunately, he quickly fell out with his supervisor, which he suspected was partially due to not deferring to him on non-physics subjects. Flyers for other positions began appearing in his pigeonhole, and Derman began seriously considering converting to become a medical doctor.
Next stop was the University of Colorado at Boulder, but this meant that he would be separated from his family, given that his wife's New York-based career was showing more promise than his. There, he dabbled with Buddhist meditation, but this would be the end of the road for him, physics-wise. By 1980, Derman hung up his academic career, and joined AT&T's Bell Labs (which, as it happens, did quite a bit of machine learning).

[To be continued...]

Next: Second Half-Life


Copyright © 2006-2026 GLYS. All Rights Reserved. |
